Keras

experiments with the Keras #DeepLearning library for Python

Introduction

This is a whirlwind post that is meant to provide:

- pointers to basic environment setup

- quick ramp on neural nets using the XOR problem

- simple training for the MNIST digit recognition task

- samples involving OpenAI’s Gym environment for reinforcement learning tasks

It is not intended to provide:

- background on neural networks, deep learning, or underlying mathematics

- network architectures and hyperparameter tuning

- deep exploration of libraries, APIs, object models, and tuning

Links are provided to source blog posts that go into more detail on each of these examples (including some of the underlying theory/math/background).

Our future posts will build on this basic foundation as we tackle more realistic problems using Keras.

Prerequisites and Setup

Sample Code

Sample code is maintained in the keras repo

Abstractions

The primary benefit of Keras is that it abstracts the various backends, such as TensorFlow, Theano, and CNTK. It provides a “user friendly” API for dealing with deep neural network architectures commonly found in today’s CNNs and RNNs.

Data Abstractions

Data in deep learning is represented as tensors of various dimensions, e.g., 0D (scalar), 1D (vectors), 2D (matrices), nD (tensors).

Tensors also have a shape, which indicates how many elements the tensor has along each axis. For example, a stack of three 4x4 matrices has a shape of (3, 4, 4).

The elements also have a primitive data type, such as uint8 or float32.
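As a quick illustration of rank, shape, and element type, here is a minimal sketch using NumPy arrays as stand-ins for tensors. The variable names are made up for this example and do not come from the sample repo.

```python
import numpy as np

# Illustrative tensors of increasing rank (names are hypothetical).
scalar = np.array(7)                                   # 0D
vector = np.array([1.0, 2.0, 3.0], dtype=np.float32)   # 1D
matrix = np.zeros((4, 4), dtype=np.uint8)              # 2D
stack = np.zeros((3, 4, 4), dtype=np.float32)          # 3D: three 4x4 matrices

for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("stack", stack)]:
    # ndim is the number of axes, shape the size along each axis
    print("{}: ndim={}, shape={}, dtype={}".format(name, t.ndim, t.shape, t.dtype))
```

The same three attributes (ndim, shape, dtype) are what the MNIST sample later prints for the training images.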

Data Operations

Tensors are manipulated via operations, such as computing dot-products, matrix multiplication, or reshaping.
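The operations mentioned above can be sketched with NumPy as follows. This is only an illustration with made-up values; Keras backends provide their own equivalents of these operations.

```python
import numpy as np

a = np.array([[1.0, 2.0], [3.0, 4.0]])
b = np.array([[5.0, 6.0], [7.0, 8.0]])
v = np.array([1.0, 1.0])

dot_vv = np.dot(v, v)   # dot product of two vectors gives a scalar
mat_prod = a @ b        # matrix multiplication of two 2x2 matrices

# Reshaping changes how the same elements are indexed, not the elements themselves.
images = np.zeros((3, 4, 4))
flat_images = images.reshape((3, 16))  # three 4x4 matrices flattened to three 16-vectors

print(dot_vv)
print(mat_prod)
print(flat_images.shape)
```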

TensorFlow Backend and GPUs

We will focus on using TensorFlow as the preferred backend, taking advantage of the local machine’s GPUs, if any. To test this setup, from within your configured environment (see above):

source activate keras

cd keras/gpu-test

python main.py

This runs a simple Python script that prints the device configuration:

from tensorflow.python.client import device_lib

print(device_lib.list_local_devices())

On my MacBook Pro, I get:

name: "/device:CPU:0"

device_type: "CPU"

memory_limit: 268435456

While on my Linux desktop with two GeForce GTX 1080 Ti GPUs, I get:

name: "/device:CPU:0"

device_type: "CPU"

memory_limit: 268435456

name: "/device:GPU:0"

device_type: "GPU"

memory_limit: 10921259828

physical_device_desc: "device: 0, name: GeForce GTX 1080 Ti, pci bus id: 0000:17:00.0, compute capability: 6.1"

name: "/device:GPU:1"

device_type: "GPU"

memory_limit: 10672223028

physical_device_desc: "device: 1, name: GeForce GTX 1080 Ti, pci bus id: 0000:65:00.0, compute capability: 6.1"

XOR

A classic non-linear problem that motivates the multi-layer perceptron is the simple logical exclusive-OR (XOR), which is true when exactly one of the two inputs is true (but not both):

http://www.ece.utep.edu/research/webfuzzy/docs/kk-thesis/kk-thesis-html/node19.html
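To make the need for a hidden layer concrete, the sketch below builds the XOR truth table and then evaluates a tiny two-layer network whose weights were picked by hand for this illustration (they are an assumption of this example, not weights learned by any of the samples). A single linear layer cannot produce these outputs, but one hidden ReLU layer can.

```python
import numpy as np

# XOR truth table: the output is 1 when exactly one input is 1.
inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
expected = [a ^ b for a, b in inputs]
X = np.array(inputs, dtype=np.float32)

def relu(z):
    return np.maximum(z, 0.0)

# Hand-picked (hypothetical) weights for a 2-2-1 network.
W1 = np.array([[1.0, 1.0],
               [1.0, 1.0]])     # input -> hidden
b1 = np.array([0.0, -1.0])      # hidden biases
w2 = np.array([1.0, -2.0])      # hidden -> output

hidden = relu(X @ W1 + b1)      # non-linear hidden layer
output = hidden @ w2            # linear read-out

for (a, b), out in zip(inputs, output):
    print("{} XOR {} = {}".format(a, b, int(out)))
```

The samples that follow learn weights like these from data instead of hard-coding them.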

With NumPy

There is a nice implementation of this using nothing more than numpy described in A Neural Network in 11 lines of Python (Part 1), which reduces to:

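import numpy as np

# inputs (the third column is a constant bias term) and expected XOR outputs

X = np.array([ [0,0,1],[0,1,1],[1,0,1],[1,1,1] ])

y = np.array([[0,1,1,0]]).T

# randomly initialize both weight layers in [-1, 1)

syn0 = 2*np.random.random((3,4)) - 1

syn1 = 2*np.random.random((4,1)) - 1

for j in range(60000):

    # forward pass through two sigmoid layers

    l1 = 1/(1+np.exp(-(np.dot(X,syn0))))

    l2 = 1/(1+np.exp(-(np.dot(l1,syn1))))

    # backpropagate the error through each layer

    l2_delta = (y - l2)*(l2*(1-l2))

    l1_delta = l2_delta.dot(syn1.T) * (l1 * (1-l1))

    # update the weights

    syn1 += l1.T.dot(l2_delta)

    syn0 += X.T.dot(l1_delta)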

(We have used a slightly different implementation in our sample.)

To run:

source activate keras

cd keras/xor-numpy

python main.py

With Keras

This simple model can be translated into Keras following Understanding XOR with Keras and TensorFlow:

import numpy as np

from keras.models import Sequential

from keras.layers import Dense

# the four different states of the XOR gate

training_data = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], "float32")

# the four expected results in the same order

target_data = np.array([[0], [1], [1], [0]], "float32")

model = Sequential()

model.add(Dense(16, input_dim=2, activation='relu'))

model.add(Dense(1, activation='sigmoid'))

model.compile(loss='mean_squared_error',

              optimizer='adam',

              metrics=['binary_accuracy'])

model.fit(training_data, target_data, epochs=500, verbose=2)

print("prediction: {}".format(model.predict(training_data).round()))

To run this example:

source activate keras

cd keras/xor-keras

python main.py

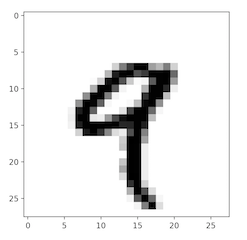

MNIST

Another classic problem used to determine the worthiness of a machine learning algorithm is recognizing handwritten digits, using the dataset known as MNIST.

Keras has built-in support for this dataset, which makes working with it quite easy.

Matplotlib

It is often useful to visualize various aspects of the problem during solution development or processing. A common Python library is matplotlib. In this sample, we simply load the MNIST training data and render one of the samples:

from keras.datasets import mnist

import matplotlib.pyplot as plt

(train_images, train_labels), (test_images, test_labels) = mnist.load_data()

print("training data = dims: {}, shape: {}, dtype: {}".format(

    train_images.ndim, train_images.shape, train_images.dtype))

digit = train_images[4]

plt.imshow(digit, cmap=plt.cm.binary)

plt.show()

To run:

source activate keras

cd mnist_plt

python main.py

A successful run of the matplotlib sample should produce a rendering like:

Classifying Digits

The second MNIST sample has been slightly modified from MNIST Handwritten digits classification using Keras to train a model that is capable of recognizing handwritten digits.

To run:

source activate keras

cd mnist

python main.py

The full training run will produce a JSON-based model file and console output indicating the accuracy:

Test loss: 0.02734791577121632

Test accuracy: 0.9917

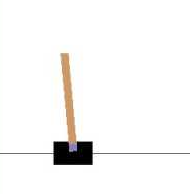
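The classifier code itself lives in the sample, so it is not reproduced here. As a rough sketch of the data handling that usually surrounds such a model, the snippet below uses random stand-in data shaped like MNIST (28x28 uint8 images, labels 0 to 9) to show pixel scaling, one-hot label encoding, and how an accuracy figure like the one above is computed. The preprocessing choices and the fake predictions are assumptions of this example and may differ from what the sample does.

```python
import numpy as np

# Stand-in data shaped like MNIST; not the real dataset.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(5, 28, 28), dtype=np.uint8)
labels = np.array([3, 0, 9, 1, 3])

# Scale pixels to [0, 1] and add a trailing channel axis (a common convention).
x = images.astype("float32") / 255.0
x = x.reshape((-1, 28, 28, 1))

# One-hot encode the labels: row i has a 1.0 in column labels[i].
num_classes = 10
one_hot = np.eye(num_classes, dtype="float32")[labels]

# Fake "predicted probabilities": correct everywhere except the first sample.
fake_probs = one_hot.copy()
fake_probs[0] = np.roll(fake_probs[0], 1)

# Accuracy is the fraction of samples whose most likely class matches the label.
predicted = fake_probs.argmax(axis=1)
accuracy = float((predicted == labels).mean())
print("accuracy: {}".format(accuracy))
```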

OpenAI Gym

From the website:

OpenAI Gym is a toolkit for developing and comparing reinforcement learning algorithms.

To get started with this example (gym-simple), import the packages:

import gym

import gym.spaces

Environments

The core abstraction of Gym is that of an environment that can be used to solve reinforcement learning problems. These include pendulum experiments (CartPole-v0), video games (MsPacman-v0), etc.

Installed environments can be listed:

from gym import envs

print("Environments: {}".format(envs.registry.all()))

Observations

An observation is an environment-specific object representing what your agent perceives of the environment, such as raw pixel values, joint angles and velocities, etc.

Actions

After reflecting on an observation and deciding what to do, your agent performs an action in the environment during a step, which produces a new observation along with a reward.
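The observe, act, reward cycle can be sketched without Gym at all. The toy environment below is hypothetical and written only for this illustration; it mimics the reset/step shape of the interface in a simplified form. Gym's own step returns additional values, and its exact signature differs between releases.

```python
import random

class CoinFlipEnv:
    """Hypothetical toy environment (not part of Gym): guess the coin that is shown."""

    def __init__(self, episode_length=5, seed=0):
        self._rng = random.Random(seed)
        self._episode_length = episode_length
        self._steps = 0
        self._coin = 0

    def reset(self):
        # Start a new episode and return the first observation.
        self._steps = 0
        self._coin = self._rng.randint(0, 1)
        return self._coin

    def step(self, action):
        # Reward the agent when its action matches the coin it observed.
        reward = 1.0 if action == self._coin else 0.0
        self._steps += 1
        done = self._steps >= self._episode_length
        self._coin = self._rng.randint(0, 1)  # next observation
        return self._coin, reward, done

def run_episode(env, policy):
    observation = env.reset()
    total_reward = 0.0
    done = False
    while not done:
        action = policy(observation)                   # decide based on the observation
        observation, reward, done = env.step(action)   # act, then observe again
        total_reward += reward
    return total_reward

# A policy that simply echoes the observation always guesses correctly here.
total = run_episode(CoinFlipEnv(), policy=lambda obs: obs)
print("total reward: {}".format(total))
```

A learning agent would replace the fixed policy with one that is updated from the rewards it collects.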

Spaces

Every environment includes an observation space and an action space, which describe their valid formats.

# load an environment

env = gym.make('CartPole-v0')

print("Action space: {}".format(env.action_space))

print("Observation space: {}".format(env.observation_space))

To run:

source activate keras

cd keras/gym-simple

python main.py

After the catalog of environments, you should see the output of the space definitions:

Action space: Discrete(2)

Observation space: Box(4,)

And an animation of something resembling:

Conclusion

This was mainly a dip of the toe into these rich topics. We’ll be digging into more of the details as we attempt to actually construct deep nets to solve some more interesting problems.

License

MIT © 2018 Donald Thompson